Artificial intelligence is transforming the way we interact with technology, but it also raises an urgent question: is it inclusive? This article explores how AI can boost digital accessibility, from automatically generating subtitles to detecting barriers on websites, and examines the risks of exclusion when it is not designed with everyone in mind. We look at how tools like ChatGPT, Gemini, and Claude address accessibility, and reflect on the role AI will play in creating a truly universal future.

We live in a time when technology based on Artificial Intelligence (AI) is no longer just a “cool” extra in digital projects, but a key driver of innovation. When we apply AI to the field of web accessibility, the question is twofold: how can AI improve accessibility for people with disabilities, and are the AI solutions themselves designed so that these people can actually use them?

This article seeks to explore both sides: the promising and the challenging.

How is AI enhancing digital accessibility?

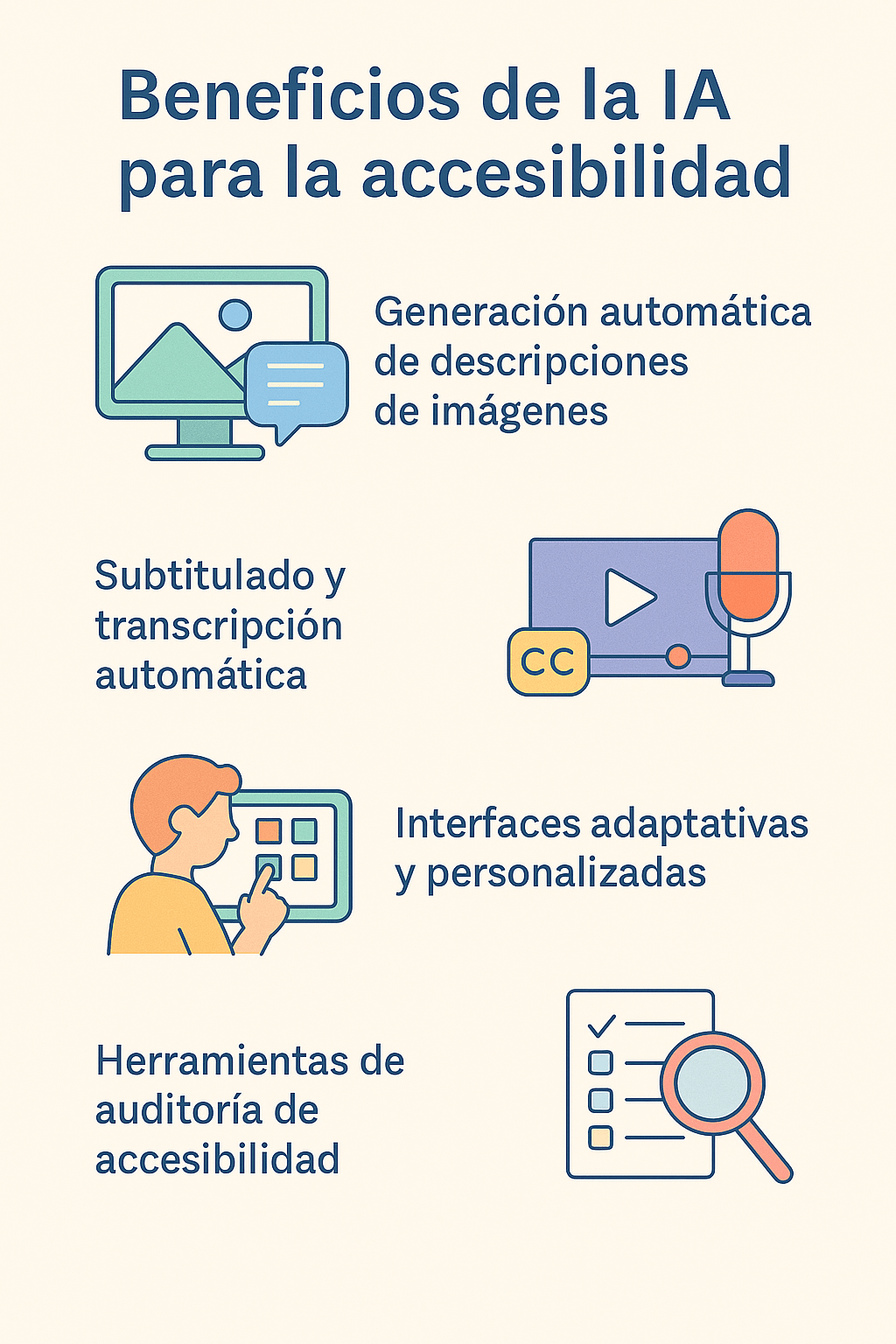

AI is transforming the way digital content is generated, adapted and delivered, opening up new opportunities for accessibility. Here are some concrete examples:

- Automatic generation of image descriptions (alt text) using computer vision, which gives people with visual impairments a text equivalent of visual information.
- Automatic subtitling and transcription of audio and video, and real-time translation, which improve access for people who are deaf or hard of hearing.
- Adaptive interfaces that learn the user’s motor, cognitive or sensory preferences.
- AI-based automatic accessibility audit tools that detect problems in web pages, such as insufficient contrast, poor semantic structure or overly complex text.
- Development assistants that help the technical team incorporate good accessibility practices from the early stages.

These advances allow accessibility to stop being a final “correction” and to be built in earlier, at scale and automatically.
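To make the contrast example concrete, here is a minimal sketch of the kind of check an automated audit tool can run. It uses the relative luminance and contrast ratio formulas defined in WCAG 2.x and the 4.5:1 threshold for normal-size text at level AA. The function names are illustrative assumptions, not the API of any particular audit tool.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, as defined in WCAG 2.x."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a colour written as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio between two colours, from 1.0 up to 21.0."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_normal_text(foreground: str, background: str) -> bool:
    """True when the pair meets the 4.5:1 minimum for normal-size text (level AA)."""
    return contrast_ratio(foreground, background) >= 4.5


if __name__ == "__main__":
    for fg in ("#000000", "#767676", "#777777"):
        ratio = contrast_ratio(fg, "#FFFFFF")
        print(fg, round(ratio, 2), passes_aa_normal_text(fg, "#FFFFFF"))
```

A check like this is cheap to run on every build, which is what makes contrast a good candidate for early, automatic detection. It says nothing about whether the text itself is understandable.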

The risks: when AI is not designed for all

However, AI is not a panacea. There are several real risks that we must consider:

- If AI models have not been trained on data from diverse users (people with different types of disabilities and from different cultural contexts), they can embed biases, make certain needs invisible or produce erroneous results.
- Some “automatic” solutions promise accessibility but fall short on context or quality, for example by generating incorrect or confusing image descriptions for blind people.
- Accessibility does not depend on technology alone: it also requires design, structure, interaction, understandable content and compatibility with assistive technologies. If AI generates content or interfaces without these foundations, the gap can widen.
- AI-based automated audit tools can detect many errors, but not all (e.g. usability issues, context, real user experience). Human supervision remains necessary.

In short: AI can widen inclusion, but it can also widen exclusion if it is not designed, implemented and monitored against accessibility criteria.
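The limits of automated auditing can be illustrated with a small sketch. The checker below, written with Python's standard `html.parser` module, finds images that have no `alt` attribute and images whose `alt` is empty. The class and function names are hypothetical, and real audit tools check far more than this.

```python
from html.parser import HTMLParser


class ImageAltAuditor(HTMLParser):
    """Collects <img> tags whose alt attribute is absent, or present but empty."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []  # no alt attribute at all: a machine-detectable failure
        self.empty_alt = []    # alt="": acceptable only if the image is decorative

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or "(no src)"
        if "alt" not in attributes:
            self.missing_alt.append(src)
        elif not (attributes["alt"] or "").strip():
            # Whether the image really is decorative is a human judgement.
            self.empty_alt.append(src)


def audit_images(html):
    """Parse an HTML string and return the auditor holding its findings."""
    auditor = ImageAltAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor


if __name__ == "__main__":
    page = '<img src="a.png"><img src="b.png" alt=""><img src="c.png" alt="image 1">'
    result = audit_images(page)
    print("Missing alt:", result.missing_alt)
    print("Empty alt, needs a human decision:", result.empty_alt)
```

Note what the sketch cannot do: an image with `alt="image 1"` passes the check even though the text describes nothing. Judging whether a description is accurate and useful in context is the part that still needs a person.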

Are the major AI platforms designed for people with disabilities?

Let’s look briefly at how some AI platforms are tackling this challenge and what issues remain open.

- ChatGPT (from OpenAI): Its web interface and mobile apps can be used with screen readers, and it already has voice integration in some environments. Even so, image generation (and visual interfaces) still poses description and usability challenges for people with severe visual impairments.
- Gemini (from Google): It has announced accessibility improvements (for example, integration with vision and image description tools on Android) that benefit people with visual disabilities. However, general use, especially in the web versions, may still present barriers.
- Claude (from Anthropic): It focuses on generating clear language, which is positive for cognitive accessibility. The challenge remains in the interface, in the dialogue structure and in full compatibility with assistive technologies.

Provisional conclusion: yes, these platforms are “getting closer” to meeting the challenge of accessibility, but we cannot yet say that they are fully designed and optimized for all types of disabilities. There is a difference between “being able to use” and “using with full autonomy and comfort.”

Best practices for integrating AI and accessibility in web projects

At TuWebAccesible, whose clients include companies with accessibility requirements (e.g. audits, certifications), we recommend the following:

- Integrate accessibility into the AI design: when AI is used for content, interfaces or adaptations, consider functional diversity from the beginning (people with visual, hearing, motor or cognitive disabilities).
- Check compatibility with assistive technologies: screen readers, magnifiers, keyboards, alternative input devices, etc.
- Keep human supervision and real testing with disabled users: AI can automate, but it cannot replace real user experience.
- Be transparent about AI limitations: if the system generates automatic descriptions, warn users of possible errors or let them review and edit the result.
- Combine accessibility audits and AI: pair automatic (AI) tools with manual accessibility audits to ensure both compliance with standards (such as W3C WAI / WCAG 2.2) and real usability.
- Train the technical team: developers and designers must understand accessibility even when using “AI plugins” or automatic generators. For example, the AI should be asked not just to generate code, but to generate accessible code.
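The recommendation to be transparent about AI limitations can be sketched as a small data structure. It keeps the machine-generated description separate from the human-reviewed one and produces a notice while the description is unreviewed. Everything here is an illustrative assumption: the class, the field names and the wording of the notice are hypothetical, and the captioning model that would supply `machine_text` is out of scope.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AltTextSuggestion:
    image_src: str
    machine_text: str                    # proposed by a (hypothetical) captioning model
    reviewed_text: Optional[str] = None  # set once a person confirms or corrects it

    @property
    def needs_review(self) -> bool:
        """True until a person has confirmed or corrected the description."""
        return self.reviewed_text is None

    def published_text(self) -> str:
        """Text to use as the alt attribute: the reviewed version when it exists."""
        if self.reviewed_text is not None:
            return self.reviewed_text
        return self.machine_text

    def disclosure(self) -> Optional[str]:
        """Notice to show alongside the image while the description is unreviewed."""
        if self.needs_review:
            return "This description was generated automatically and may contain errors."
        return None


if __name__ == "__main__":
    suggestion = AltTextSuggestion("sales.png", "A chart")
    print(suggestion.published_text(), "|", suggestion.disclosure())
    suggestion.reviewed_text = "Bar chart of monthly sales, rising from January to June"
    print(suggestion.published_text(), "|", suggestion.disclosure())
```

Keeping both versions means an editor can always see what the model proposed, and the notice disappears only after a person has taken responsibility for the text.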

Looking to the future: a vision for 2025-2030

Looking at the broader horizon:

- AI could act as an accessibility co-pilot in generating content, interfaces and personalized adaptations for each user according to their functional profile.
- AI models could integrate accessibility standards automatically (for example, when generating an interface, the model evaluates its compliance with WCAG, EN 301 549, etc.).
- AI tools could predict accessibility barriers before the user encounters them and offer automatic adaptations (for example, a different flow, cognitive simplification or higher contrast).
- The risk will remain that digital inequality widens if these technologies stay out of reach of people with limited resources or without compatible devices.

The key idea: accessibility is not an extra. In the world of AI, it becomes the critical “proof” that technology serves all people, not just some.

Artificial intelligence opens an immense horizon for digital accessibility, but only if it is designed and implemented with accessibility as a central axis, not as an add-on. At TuWebAccesible, this means helping organizations adopt accessible AI, auditing their implementations and ensuring that no one is excluded from the digital transformation.

“Accessibility is not a collateral benefit of artificial intelligence. It is its definitive proof of humanity.”